Polyglot LLM: Insights from Using Multiple LLMs

- Applying polyglot programming philosophy to AI coding lets you leverage each LLM's strengths where it matters most

- Claude excels at complex reasoning and code quality, Gemini at speed and multimodality, Codex at automated test execution

- Relying on a single model risks getting stuck in the same failed approach and vendor lock-in, while a multi-LLM setup adds cross-validation and cost optimization

The word "polyglot" comes from Greek, meaning "multiple languages." Polyglot programming is the practice of choosing the best language for each task instead of limiting yourself to one. I first encountered this concept through 임백준 (Baekjoon Lim)'s book 폴리글랏 프로그래밍 (Polyglot Programming). The term was coined by Neal Ford of ThoughtWorks in a 2006 blog post. The core idea is simple: if Python, Rust, and JavaScript each excel in different areas, why not use all three?

Edsger Dijkstra, famous for the shortest path algorithm, once wrote that it is practically impossible to teach good programming to students who have had prior exposure to BASIC. The claim is extreme, but it illustrates how being stuck in a single language can limit your thinking.

Polyglot programmers often say:

"I'm not a Java developer. I'm just a developer. Language is just a tool."

Developers who know multiple languages don't get trapped in language-specific frameworks when solving problems. It is the same effect as when someone who has only ever thought in OOP learns functional programming and gains a completely new perspective on code.

AI Coding Needs Polyglot Too

Let's take this one step further. You can apply the polyglot concept directly to AI coding. In 2026, frontier LLMs each have distinct strengths. And with open-source models like MiniMax-M2 and Kimi-K2 achieving frontier-level performance, the options have exploded.

Sticking to one LLM is essentially the same problem as trying to solve everything with Python. Here's a breakdown of each model's characteristics:

Claude: Excels at complex reasoning and code quality. It pays attention to type safety, naming conventions, and code structure, and its long-context handling makes it suitable for large codebases. It especially shines in debugging and refactoring tasks that require thorough analysis.

Gemini: Stands out in speed, context window, and multimodal capabilities. It offers a 1 million token context window, and its strong vision understanding has earned top rankings on the MMMU benchmark. It is especially strong in frontend/UI development: it took #1 on WebDev Arena, producing visually polished web apps with complete, interactive UIs in a single pass.

Codex: OpenAI's asynchronous coding agent. It writes code and automatically runs tests in an isolated sandbox environment. It is highly versatile, handles almost any framework and library, and often takes a different approach from Claude Code in complex refactoring and multi-file work.

Open-source LLMs: MiniMax-M2 scored 69.4% on SWE-bench Verified, approaching frontier model performance, while Kimi-K2 shows strength in agentic tasks. Both are released under permissive MIT-style licenses and are freely customizable, which makes them one of the most practical choices when cost-to-performance ratio matters.

Why Multi-LLM Beats Single LLM

Just like polyglot programming means using the right tool for the right job, polyglot AI coding follows the same principle.

Wider thinking. Russian has two separate words for blue (one for light blue, one for dark blue), and some African languages use a single word for both green and blue. Just as language shapes how we perceive the world, comparing outputs from different LLMs reveals different approaches to the same problem.

Avoid vendor lock-in. During the major OpenAI outage in January 2025, the entire service went down. "Bad Gateway" and "503" errors flooded in, leaving both the web and mobile apps unusable for hours. If you rely on a single model, an outage like that stops your work completely. If you are already familiar with multiple models, you can switch within hours.
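As a minimal sketch of what switching can look like in code, the snippet below tries a list of providers in order and returns the first successful answer. The `ModelFn` type, `ask_with_fallback`, and the two stand-in provider functions are hypothetical names made up for illustration; real SDK calls would be wrapped behind the same `str -> str` shape.

```python
from typing import Callable, Sequence

# Hypothetical type: any function that takes a prompt and returns text.
ModelFn = Callable[[str], str]


def ask_with_fallback(
    prompt: str, providers: Sequence[tuple[str, ModelFn]]
) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, answer) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any provider failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in functions that simulate an outage and a healthy provider.
def primary_down(prompt: str) -> str:
    raise ConnectionError("503 Service Unavailable")


def backup_ok(prompt: str) -> str:
    return f"answer to: {prompt}"


used, answer = ask_with_fallback(
    "refactor this function",
    [("primary", primary_down), ("backup", backup_ok)],
)
print(used, "->", answer)
```

The ordering of the list doubles as a preference ranking, so the same helper works whether the fallback is a second vendor or a self-hosted open-source model.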

Cost optimization. You don't need premium models for every task. Use lightweight models for simple boilerplate generation and top-tier models for complex architecture design. As a rough rule of thumb, about 80% of tasks are handled fine by mid-tier models, and only about 20% need a premium one.
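To make the 80/20 split concrete, here is a small worked calculation. The per-task prices are placeholders chosen only to show the arithmetic (a premium model assumed to cost 10x the mid-tier one); they are not real vendor pricing, and `blended_cost` is a hypothetical helper.

```python
def blended_cost(
    total_tasks: int, premium_share: float, mid_price: float, premium_price: float
) -> float:
    """Total cost when premium_share of tasks use the premium model and the rest use mid-tier."""
    if not 0.0 <= premium_share <= 1.0:
        raise ValueError("premium_share must be between 0 and 1")
    premium_tasks = total_tasks * premium_share
    mid_tasks = total_tasks - premium_tasks
    return premium_tasks * premium_price + mid_tasks * mid_price


# Placeholder prices per task, NOT real vendor pricing.
MID_PRICE = 1.0
PREMIUM_PRICE = 10.0

all_premium = blended_cost(100, 1.0, MID_PRICE, PREMIUM_PRICE)  # every task on premium
split_80_20 = blended_cost(100, 0.2, MID_PRICE, PREMIUM_PRICE)  # 80% mid-tier, 20% premium
print(all_premium, split_80_20, split_80_20 / all_premium)
```

Under these assumed prices, routing only 20% of tasks to the premium model costs 28% of sending everything there. The actual saving depends entirely on the real price gap and on how many tasks truly need the stronger model.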

Cross-validation. A single LLM can give wrong answers with full confidence. Asking different models the same question and comparing the results helps catch hallucinations. This kind of cross-validation is essential in sensitive domains like healthcare or finance.
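One simple way to compare results is a majority vote that flags the models that disagree. The sketch below assumes short answers that can be compared as normalized strings, which is a strong simplification; longer outputs would need a smarter comparison. The model names and answers are hard-coded stand-ins, and `normalize` and `cross_validate` are hypothetical helpers.

```python
from collections import Counter


def normalize(answer: str) -> str:
    """Crude normalization so trivially different answers compare equal."""
    return " ".join(answer.lower().split())


def cross_validate(answers: dict[str, str]) -> tuple[str, list[str]]:
    """Return the most common normalized answer and the models that disagree with it."""
    if not answers:
        raise ValueError("need at least one answer")
    normalized = {model: normalize(text) for model, text in answers.items()}
    consensus, _ = Counter(normalized.values()).most_common(1)[0]
    dissenters = [model for model, text in normalized.items() if text != consensus]
    return consensus, dissenters


# Hard-coded stand-ins for responses from three hypothetical models.
answers = {
    "model_a": "O(n log n)",
    "model_b": "o(n  log n)",
    "model_c": "O(n^2)",
}
consensus, dissenters = cross_validate(answers)
print(consensus, dissenters)
```

Agreement between models is not proof of correctness, since models can share the same mistake. The useful signal is the disagreement: a non-empty `dissenters` list marks an answer worth checking by hand.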

Breaking through stuck problems. I have experienced this personally: sometimes model A keeps failing to fix the same bug, looping in the same direction no matter how you change the context. Switching to model B and starting fresh often solves it with a completely different approach.

For example, a complex issue that I could not resolve with Claude no matter how much I tried was fixed immediately when I handed it to Codex from scratch, and the reverse has happened too. This is likely because each model has learned different patterns and problem-solving strategies. Where one model gets stuck in a local minimum, another can break free.
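The habit of switching models after repeated failures can be sketched as a small loop. Everything here is an assumption for illustration: `fix_with_rotation`, the `FixFn` signature, the two stand-in models, and the test check are hypothetical, and the limit of two attempts per model is an arbitrary choice.

```python
from typing import Callable, Optional, Sequence

# Hypothetical signature: takes a bug description, returns a candidate patch.
FixFn = Callable[[str], str]


def fix_with_rotation(
    bug: str,
    models: Sequence[tuple[str, FixFn]],
    passes_tests: Callable[[str], bool],
    attempts_per_model: int = 2,
) -> Optional[tuple[str, str]]:
    """Give each model a few fresh attempts; move on to the next model when it keeps failing."""
    for name, propose_fix in models:
        for _ in range(attempts_per_model):
            # Each call gets only the original bug report, so no failed context carries over.
            patch = propose_fix(bug)
            if passes_tests(patch):
                return name, patch
    return None


# Stand-ins: model_a keeps proposing the same failing idea, model_b tries something else.
def model_a(bug: str) -> str:
    return "retry with the same lock"


def model_b(bug: str) -> str:
    return "remove the shared state"


result = fix_with_rotation(
    "deadlock in worker pool",
    [("model_a", model_a), ("model_b", model_b)],
    passes_tests=lambda patch: "shared state" in patch,
)
print(result)
```

The important detail is that the second model starts from the original bug report rather than from the first model's failed conversation, which matches the "starting fresh" step described above.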

Real-World Polyglot AI Coding Workflow

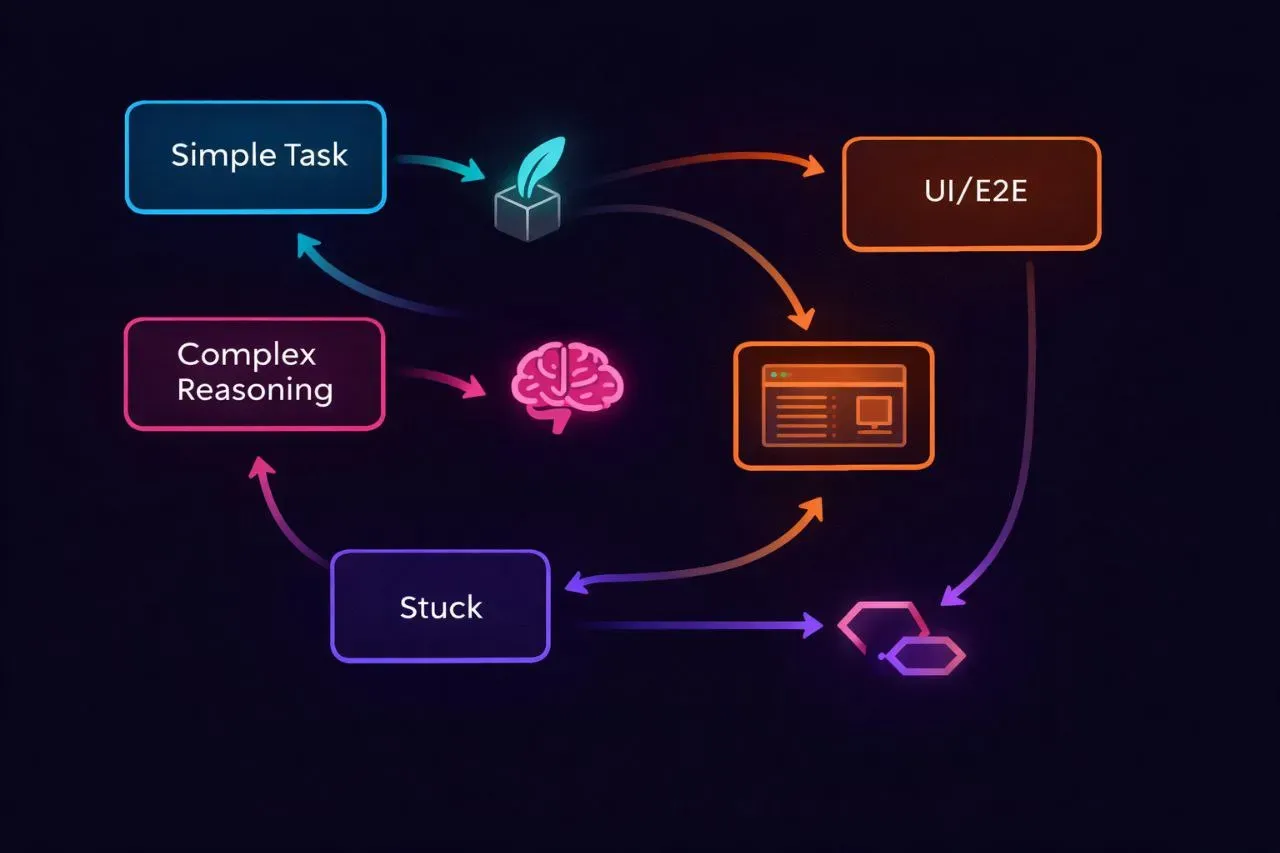

Many developers are already using each LLM for different purposes. Here's my personal combination:

Automation/repetitive tasks: I use open-source models like Kimi and MiniMax in OpenClaw. They are cost-effective for lightweight tasks, but I switch to Opus for complex work or initial setup. Kimi and MiniMax sometimes mix Chinese into their output on difficult tasks, so I switch depending on the situation. (This is very subjective and based on my OpenClaw test messages.)

Documentation and core development: I run an Opus 4.6 Agent Team. It is the best at thoroughly covering edge cases in complex logic.

Hard problems: I deploy top-tier models like Opus 4.6 or Gemini 3.0. The key is choosing the model based on difficulty.

When hitting Opus token limits: I switch to Codex, sometimes in the app and sometimes in the CLI, depending on my mood.

E2E testing/UI: Gemini is definitely stronger here. It gives better results in frontend completeness and interaction testing than the other models.

The core principle is to ask "which model fits this task?" rather than "which model is the best?", for the same reason a polyglot programmer doesn't ask "which language is the best?"
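The "which model fits this task?" question can be written down as a small routing table. The sketch below mirrors the personal combination described above; the `Task` categories, `ROUTES`, and `pick_model` are hypothetical names, and the model labels are plain strings rather than real API model identifiers.

```python
from enum import Enum


class Task(Enum):
    AUTOMATION = "automation"
    CORE_DEV = "core development"
    HARD_PROBLEM = "hard problem"
    UI_E2E = "ui / e2e testing"


# Preference order per task; labels are plain strings, not API model IDs.
ROUTES: dict[Task, list[str]] = {
    Task.AUTOMATION: ["Kimi", "MiniMax", "Opus"],
    Task.CORE_DEV: ["Opus", "Codex"],  # Codex as the fallback when Opus limits are hit
    Task.HARD_PROBLEM: ["Opus", "Gemini"],
    Task.UI_E2E: ["Gemini"],
}


def pick_model(task: Task, unavailable: frozenset[str] = frozenset()) -> str:
    """Return the first preferred model for the task that is not marked unavailable."""
    for model in ROUTES[task]:
        if model not in unavailable:
            return model
    raise LookupError(f"no available model for {task.value}")


print(pick_model(Task.CORE_DEV))
print(pick_model(Task.CORE_DEV, unavailable=frozenset({"Opus"})))
```

Keeping the mapping in one table makes it cheap to revise as new models come out, which is the point: the table encodes a judgment about fit, not a ranking of which model is best overall.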

Conclusion

It's good to become proficient in one LLM, but stopping there is a mistake. Even as new models like Gemini 3.1 come out and top the benchmarks, many developers still say "Opus is still the best," because benchmark scores and real-world feel are two different things. Ultimately, the ability to understand each model's strengths and weaknesses firsthand, and to switch accordingly, will be the core skill for AI coding in 2026.

The best tool isn't a single tool; it's an eye for choosing tools. And to develop that eye, you have to try several.

Refs

- 임백준 (Baekjoon Lim) - 폴리글랏 프로그래밍 (Polyglot Programming) (book review)

- TechTarget - What is Polyglot Programming?

- STX Next - Polyglot Programming and the Benefits of Mastering Several Languages

- PolyglotProgramming.com

- Google - Gemini 2.5 Pro: even better coding performance

- Google - Introducing Gemini 3 (Gemini 3 소개)

- VentureBeat - MiniMax-M2 is the new king of open source LLMs

- PlayCode - ChatGPT vs Claude vs Gemini for Coding 2026

- Workstation - Best LLM Models Comparison Guide

- TeamAI - Why You Should Use Multiple Large Language Models