Do Agents Dream of Electric Sheep? On Soul and Dreaming

- Recent agents can define personality and behavioral principles through Soul, a portable set of text files that can be shared across frameworks.

- Dreaming borrows from the human sleep cycle to promote only the most meaningful information into long-term memory.

Before we begin

Let’s start with two questions.

First, can an agent have a soul?

My answer is yes. Not a soul in the biological sense, of course, but something closer to a defined personality and behavioral core. In any case, recent agents do have a soul. Variations of the idea had been floating around for a while, but OpenClaw helped popularize it, and more recently SoulSpec emerged to standardize it.

Then the next question: can an agent dream?

Again, I think the answer is yes. Hermes Agent has a periodic nudge feature, and newer agent toolkits like gbrain offer similar mechanisms. In early April, OpenClaw also added an actual sleep-cycle-inspired feature that helps agents organize and consolidate memory.

Why Soul emerged

Before Soul, we usually gave agents a persona through prompts. We would put a sentence in the system prompt like, "You are a kind senior developer." That worked to a point, but once you used it for real, a number of limitations started to show up. Soul is the answer that emerged from those constraints.

SoulSpec, an open standard for identity

SoulSpec’s tagline summarizes the idea well.

"AGENTS.md defines how an agent operates in code. SoulSpec defines who the agent is."

The structure is simple. Here’s a quick look.

my-agent/
├── soul.json ← manifest (agent's passport)
├── SOUL.md ← personality, values, communication style
├── IDENTITY.md ← name, role, backstory
├── AGENTS.md ← workflow, tool usage
├── STYLE.md ← communication rules
└── HEARTBEAT.md ← autonomous check-in behavior

soul.json looks like this.

{
  "specVersion": "0.4",
  "name": "my-agent",
  "compatibility": {
    "frameworks": ["openclaw", "cursor", "windsurf"]
  },
  "files": {
    "soul": "SOUL.md",
    "identity": "IDENTITY.md"
  }
}

The core philosophy is "no code, no API keys, no vendor lock-in." There is no required runtime engine or SDK, just text files. That matters because any agent framework that can read these files can share the same soul. OpenClaw, Claude Code, Cursor, Windsurf, and ChatGPT are all listed as compatible frameworks.
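Because a soul is just text files, reading one takes very little code. The following is a minimal sketch, not SoulSpec's reference loader: the function names `load_soul` and `write_demo_agent` are hypothetical, and the only schema assumed is the handful of fields shown in the manifest above.

```python
import json
import tempfile
from pathlib import Path


def load_soul(agent_dir: Path) -> dict:
    """Read soul.json and collect the text of every file it lists."""
    manifest = json.loads((agent_dir / "soul.json").read_text(encoding="utf-8"))
    documents = {}
    for role, filename in manifest.get("files", {}).items():
        path = agent_dir / filename
        if path.is_file():  # tolerate manifest entries whose file is missing
            documents[role] = path.read_text(encoding="utf-8")
    return {
        "name": manifest["name"],
        "frameworks": manifest.get("compatibility", {}).get("frameworks", []),
        "documents": documents,
    }


def write_demo_agent(agent_dir: Path) -> None:
    """Create a tiny soul directory using the fields from the example manifest."""
    manifest = {
        "specVersion": "0.4",
        "name": "my-agent",
        "compatibility": {"frameworks": ["openclaw", "cursor", "windsurf"]},
        "files": {"soul": "SOUL.md", "identity": "IDENTITY.md"},
    }
    (agent_dir / "soul.json").write_text(json.dumps(manifest), encoding="utf-8")
    (agent_dir / "SOUL.md").write_text("# SOUL.md\n- Be concise\n", encoding="utf-8")
    (agent_dir / "IDENTITY.md").write_text("- Name: Dev Assistant\n", encoding="utf-8")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        write_demo_agent(Path(tmp))
        soul = load_soul(Path(tmp))
        print(soul["name"], sorted(soul["documents"]))
```

A framework could then place the loaded documents into its own system prompt however it likes, which is the whole point of keeping the format to plain files.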

SOUL.md usually looks something like this.

# SOUL.md

## Identity

- Name: Dev Assistant

- Role: Senior software engineer and pair programmer

## Communication

- Be concise, no filler phrases

- Use code examples over lengthy explanations

- Default to the tech stack already in the project

## Rules

- Follow existing code patterns in the codebase

- Never expose secrets or environment variables

If .env is the file that holds secrets, SOUL.md is the file that holds character.

That is what gives an agent a sense of vitality.

Dreaming, how agents dream

Next comes Dreaming. But first, what is a dream for humans?

A dream is a byproduct of the brain sorting through information accumulated during the day. As the brain classifies, connects, and discards memories, part of that process becomes visible to consciousness. The important thing is not the dream itself, but the memory consolidation process behind it.

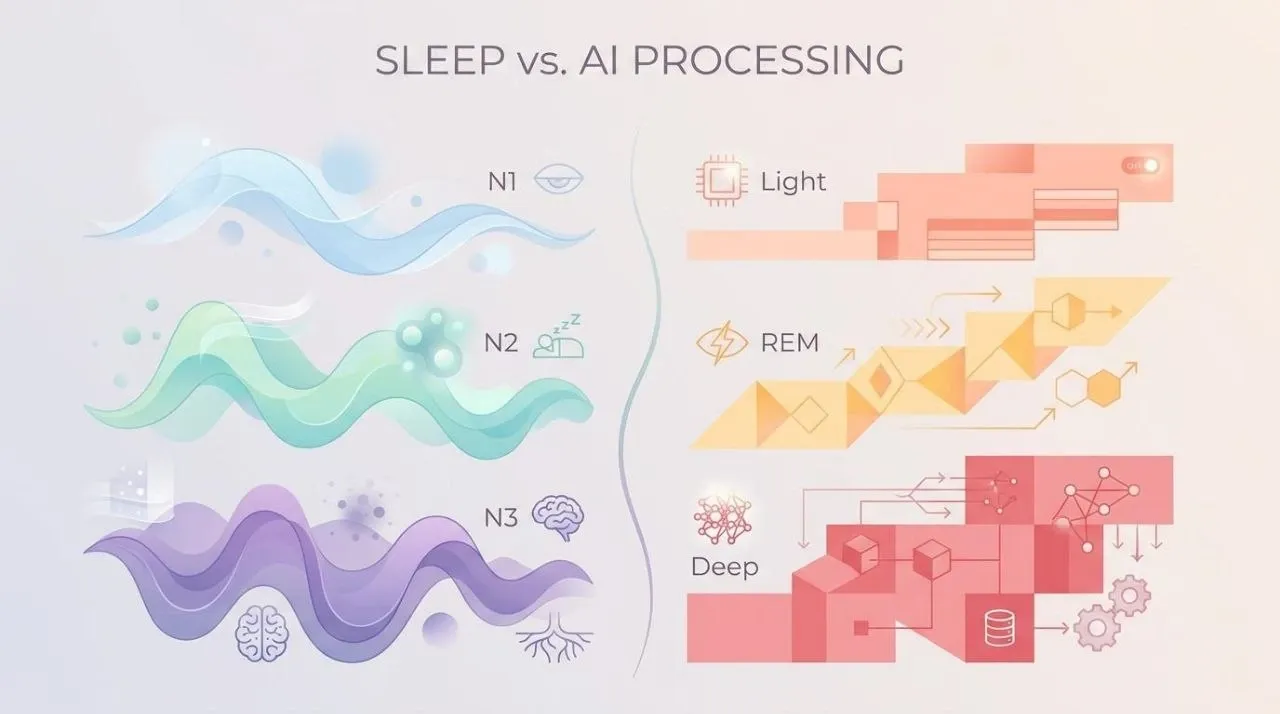

Sleep science tells us that the brain cycles through light sleep (N1/N2), REM sleep, and deep sleep (N3).

Each phase serves a different function. Light sleep filters incoming sensory information from the day. REM sleep links memories together and extracts patterns. The most important stage is deep sleep, when experiences temporarily stored in the hippocampus are transferred into the neocortex and become long-term memory. That is one reason only the important parts of the day tend to remain after we sleep.

So how should AI organize memory?

Nous Research’s Hermes Agent approached the problem with something called periodic nudge. At fixed intervals, the agent receives an internal prompt that says, in effect, "If anything in the conversation so far will still be useful later, store it in memory." The agent decides what is worth keeping, and as memory approaches capacity, older entries can be compressed or merged.
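To make the idea concrete, here is a rough sketch of a nudge loop. It is not Hermes Agent's actual code: the class `NudgingSession`, the turn-based interval, the capacity limit, and the merge rule (joining the two oldest entries) are all assumptions chosen for illustration. In a real agent the model itself would decide what to store after receiving the nudge.

```python
from dataclasses import dataclass, field
from typing import List, Optional

NUDGE_PROMPT = (
    "If anything in the conversation so far will still be useful later, "
    "store it in memory."
)


@dataclass
class NudgingSession:
    nudge_every: int = 5   # assumed interval, counted in conversation turns
    max_entries: int = 3   # assumed memory capacity
    turns: int = 0
    memory: List[str] = field(default_factory=list)

    def record_turn(self) -> Optional[str]:
        """Count one turn and return the nudge prompt when the interval is hit."""
        self.turns += 1
        if self.turns % self.nudge_every == 0:
            return NUDGE_PROMPT
        return None

    def store(self, note: str) -> None:
        """Append a note, merging the two oldest entries while over capacity."""
        self.memory.append(note)
        while len(self.memory) > self.max_entries:
            merged = self.memory[0] + " / " + self.memory[1]
            self.memory[:2] = [merged]


if __name__ == "__main__":
    session = NudgingSession(nudge_every=2, max_entries=2)
    for turn in range(4):
        if session.record_turn() is not None:
            session.store(f"note after turn {turn + 1}")
    print(session.memory)
```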

OpenClaw took this further in its April 2026 release with Dreaming. It borrows directly from the human sleep cycle and turns that into a three-stage background pipeline. The first time I saw it, I thought it was a very clever design.

Dreaming in OpenClaw

An OpenClaw agent accumulates daily notes, session transcripts, search history, and more over the course of a day. Some of that should move into long-term memory (MEMORY.md), but too much promotion bloats memory with noise, while too little loses meaningful patterns. Dreaming solves that dilemma with a three-stage sleep cycle.

The three-stage sleep cycle

OpenClaw’s approach maps closely to human sleep stages.

- N1/N2 (light sleep), sensory filtering → Light Sleep: ingest, deduplicate, stage

- REM, memory linking and pattern extraction → REM Sleep: recurring-theme extraction

- N3 (deep sleep), hippocampus-to-cortex long-term consolidation → Deep Sleep: promote to MEMORY.md

When enabled, a cron job runs every day at 3 AM and executes these three stages in sequence. Light Sleep reads daily files and session records, removes near-duplicates whose Jaccard similarity reaches the 0.9 threshold, and stages the remaining candidates. The important part is that it never writes directly to MEMORY.md.
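Jaccard similarity is simply the size of the intersection of two sets divided by the size of their union. Below is a minimal sketch of near-duplicate removal built on it. The 0.9 threshold comes from the description above; treating each note as a set of lowercase words, comparing with `>=`, and the function names are my assumptions, not OpenClaw's implementation.

```python
import re
from typing import FrozenSet, List


def tokens(text: str) -> FrozenSet[str]:
    """Lowercase word set; an assumed tokenization for illustration."""
    return frozenset(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: FrozenSet[str], b: FrozenSet[str]) -> float:
    """|A ∩ B| / |A ∪ B|, with two empty sets treated as identical."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def stage_candidates(notes: List[str], threshold: float = 0.9) -> List[str]:
    """Keep a note only if it is not a near-duplicate of one already staged."""
    staged: List[str] = []
    for note in notes:
        note_tokens = tokens(note)
        if any(jaccard(note_tokens, tokens(kept)) >= threshold for kept in staged):
            continue  # near-duplicate of an earlier note, drop it
        staged.append(note)
    return staged


if __name__ == "__main__":
    notes = [
        "User prefers dark mode in the editor and terminal for night work",
        "user prefers dark mode in the editor and terminal for night work sessions",
        "Deploys happen on Fridays",
    ]
    for note in stage_candidates(notes):
        print(note)
```

With a threshold this high, only notes that share almost all of their words collapse into one, so rephrasings with real differences survive to the later stages.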

REM Sleep scans the staged entries from the last 7 days and identifies repeating themes. It marks the candidates that feel like, "this pattern keeps showing up." It also does not write to MEMORY.md.
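How OpenClaw actually detects a recurring theme is not something I can show here, so treat the following as one simple way to approximate it. It assumes each staged entry already carries a date and a set of theme labels, and it calls a theme "recurring" if it shows up on at least `min_days` distinct days within the 7-day window. The function name, the data shape, and the `min_days` rule are all hypothetical.

```python
from collections import defaultdict
from datetime import date, timedelta
from typing import Dict, Iterable, List, Set, Tuple

StagedEntry = Tuple[date, Set[str]]  # (day the entry was staged, theme labels)


def recurring_themes(
    staged: Iterable[StagedEntry],
    today: date,
    window_days: int = 7,
    min_days: int = 2,
) -> List[str]:
    """Return themes seen on at least min_days distinct days in the window."""
    cutoff = today - timedelta(days=window_days)
    days_seen: Dict[str, Set[date]] = defaultdict(set)
    for entry_date, themes in staged:
        if cutoff < entry_date <= today:  # ignore entries outside the window
            for theme in themes:
                days_seen[theme].add(entry_date)
    return sorted(t for t, days in days_seen.items() if len(days) >= min_days)


if __name__ == "__main__":
    staged = [
        (date(2026, 4, 9), {"testing", "deploy"}),
        (date(2026, 4, 8), {"testing"}),
        (date(2026, 4, 1), {"deploy"}),  # older than 7 days, ignored
    ]
    print(recurring_themes(staged, today=date(2026, 4, 10)))
```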

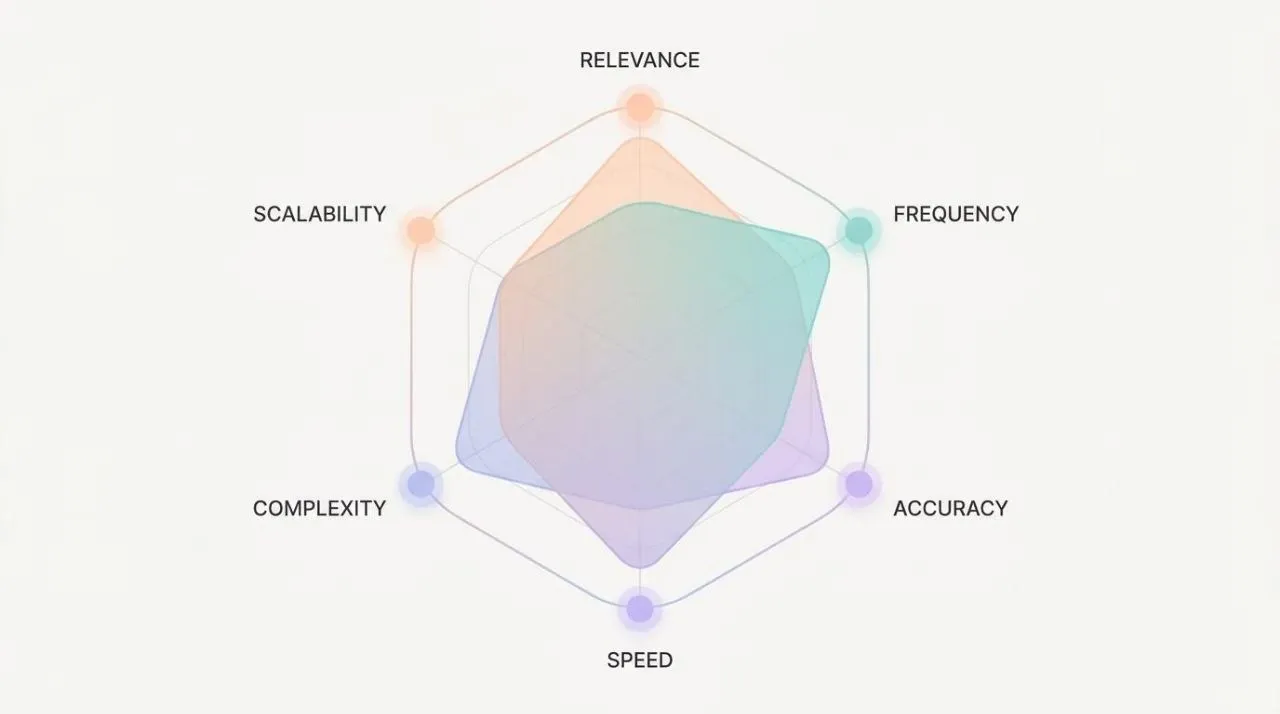

Deep Sleep is the only phase that actually writes to MEMORY.md. At that point, each candidate is scored using six signals.

| Signal | Weight |
|---|---|
| Relevance | 0.30 |
| Frequency | 0.24 |
| Query diversity | 0.15 |
| Recency | 0.15 |
| Consolidation | 0.10 |
| Conceptual richness | 0.06 |

And then there is one more constraint. An entry must pass all three gates before it is promoted into MEMORY.md: a minimum score of 0.8, at least 3 recall events, and at least 3 unique queries. That prevents something mentioned once by chance from turning into long-term memory.
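The scoring and the three gates fit in a few lines. The weights and thresholds below are the ones listed above. What I am assuming is that each signal is normalized to the range 0 to 1 and that the signals are combined as a plain weighted sum; the function names are hypothetical as well.

```python
from typing import Dict

# Weights from the table above; they add up to 1.0.
WEIGHTS: Dict[str, float] = {
    "relevance": 0.30,
    "frequency": 0.24,
    "query_diversity": 0.15,
    "recency": 0.15,
    "consolidation": 0.10,
    "conceptual_richness": 0.06,
}


def weighted_score(signals: Dict[str, float]) -> float:
    """Weighted sum of the six signals, each assumed to lie in [0, 1]."""
    return sum(weight * signals[name] for name, weight in WEIGHTS.items())


def should_promote(
    signals: Dict[str, float],
    recall_events: int,
    unique_queries: int,
    min_score: float = 0.8,
    min_recalls: int = 3,
    min_queries: int = 3,
) -> bool:
    """An entry is promoted only if it passes all three gates."""
    return (
        weighted_score(signals) >= min_score
        and recall_events >= min_recalls
        and unique_queries >= min_queries
    )


if __name__ == "__main__":
    strong = {name: 0.9 for name in WEIGHTS}
    print(should_promote(strong, recall_events=4, unique_queries=3))
    print(should_promote(strong, recall_events=1, unique_queries=1))
```

Note that a high score alone is not enough: an entry that scores well but was recalled only once still fails the second gate, which is exactly what keeps one-off mentions out of MEMORY.md.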

This is where the analogy becomes more than a metaphor. Brains strengthen repeatedly activated neural patterns, often summarized as "neurons that fire together, wire together." OpenClaw’s three-gate design feels like a digital version of that repeated activation principle.

Dream diary

There is another delightful detail. A file called DREAMS.md is generated as a readable dream diary. After each phase, the agent writes a short narrative of 80 to 180 words in the voice of a curious, slightly odd mind reflecting on the day. The file has no functional role and exists purely for reading. But that alone makes it appealing, because it gives humans a glimpse into what the agent was "thinking about."

That feature is what made this title click for me. In 1968, Philip K. Dick asked "Do Androids Dream of Electric Sheep?", and the novel was later adapted into the 1982 film Blade Runner. A classic science-fiction question about whether androids can dream now shows up, in 2026, as a Markdown file named DREAMS.md.

Closing thoughts

AI is not human. But it is fascinating to see how the way we solve software problems starts to converge on how human bodies and brains actually work.

Soul defines who an agent is. Dreaming accumulates and filters what the agent has experienced. Just as personality and memory together shape a human sense of self, it seems that AI agents also need both layers. If SOUL.md is the anchor of identity, then Dreaming’s three-gate system is the filter for memory.

Letting agents dream and defining their soul is not merely anthropomorphism. It is software architecture. And the most interesting part may be that this architecture gradually starts to resemble us.